The Abundance Bros Notice the AI Bubble but What Is Their Narrative Leaving Out?

The AI bubble has gotten so big even the Abundance Bros are noticing, but maybe there is more to the AI narrative than meets the eye.

Maybe Sam Altman and company are playing a completely different game than can be understood using conventional economic analysis.

I’ve been writing about AI mania and how it’s driving bad decision making at Meta, Elon Musk’s web of companies, OpenAI, Oracle, Anduril, and Google (and I’m hoping to get around to similar pieces on Amazon, Microsoft, Palantir, and Anthropic).

In each of those pieces I’ve followed Ed Zitron’s skepticism of LLM business models and Gary Marcus’ skepticism of the potential of LLMs to reach AGI (Artificial General Intelligence).

Now it seems that Zitron’s and Marcus’ AI bearishness is hitting the mainstream:

And if something is getting mainstream attention, it’s going to be commented on by the clique of centrist pundits that some call the Abundance Bros and whom I think of as keepers of the conventional wisdom.

The Atlantic Monthly’s Charlie Warzel has a piece called “AI is a Mass-Delusion Event” that manages to both split the baby and be quite bearish about LLMs as we know them:

What if generative AI isn’t God in the machine or vaporware? What if it’s just good enough, useful to many without being revolutionary? Right now, the models don’t think—they predict and arrange tokens of language to provide plausible responses to queries. There is little compelling evidence that they will evolve without some kind of quantum research leap. What if they never stop hallucinating and never develop the kind of creative ingenuity that powers actual human intelligence?

The models being good enough doesn’t mean that the industry collapses overnight or that the technology is useless (though it could). The technology may still do an excellent job of making our educational system irrelevant, leaving a generation reliant on getting answers from a chatbot instead of thinking for themselves, without the promised advantage of a sentient bot that invents cancer cures.

Good enough has been keeping me up at night. Because good enough would likely mean that not enough people recognize what’s really being built—and what’s being sacrificed—until it’s too late. What if the real doomer scenario is that we pollute the internet and the planet, reorient our economy and leverage ourselves, outsource big chunks of our minds, realign our geopolitics and culture, and fight endlessly over a technology that never comes close to delivering on its grandest promises? What if we spend so much time waiting and arguing that we fail to marshal our energy toward addressing the problems that exist here and now? That would be a tragedy—the product of a mass delusion. What scares me the most about this scenario is that it’s the only one that doesn’t sound all that insane.

Noah Smith is flat out asking “Will data centers crash the economy? This time let’s think about a financial crisis before it happens.”

The U.S. economic data for the last few months is looking decidedly meh. The latest employment numbers were so bad that Trump actually fired the head of the Bureau of Labor Statistics, accusing her of manipulating the numbers to make him look bad. But there’s one huge bright spot amid the gloom: an incredible AI data center building boom.

…

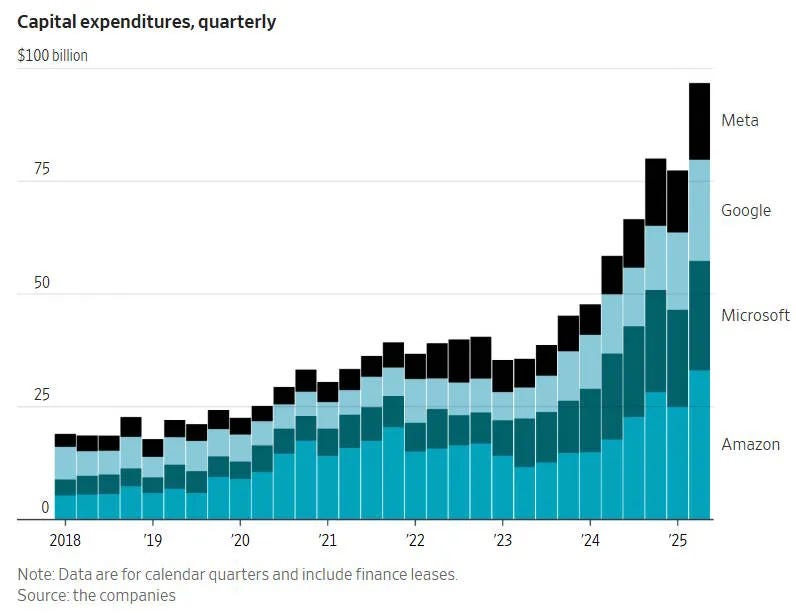

So right now, tech companies have the choice to either sit out of the boom entirely, or spend big and hope they can figure out how to make a profit. Roughly speaking, Apple is choosing the former, while the big software companies — Google, Meta, Microsoft, and Amazon — are choosing the latter. These spending numbers are pretty incredible:

For Microsoft and Meta, this capital expenditure is now more than a third of their total sales.

…

I think it’s important to look at the telecom boom of the 1990s rather than the one in the 2010s, because the former led to a gigantic crash. The railroad boom led to a gigantic crash too, in 1873 (before the investment peak on Kedrosky’s chart). In both cases, companies built too much infrastructure, outrunning growth in demand for that infrastructure, and suffered a devastating bust as expectations reset and loans couldn’t be paid back. In both cases, though, the big capex spenders weren’t wrong, they were just early. Eventually, we ended up using all of those railroads and all of those telecom fibers, and much more. This has led a lot of people to speculate that big investment bubbles might actually be beneficial to the economy, since manias leave behind a surplus of cheap infrastructure that can be used to power future technological advances and new business models.

But for anyone who gets caught up in the crash, the future benefits to society are of cold comfort. So a lot of people are worrying that there’s going to be a crash in the AI data center industry, and thus in Big Tech in general, if AI industry revenue doesn’t grow fast enough to keep up with the capex boom over the next few years.

…

So far, the danger doesn’t scream “2008”. But if you wait until 2008 to start worrying, you’re going to get 2008.

Actual “Abundance” co-author Derek Thompson isn’t quite willing to call it a bubble, but he has written a piece titled “How AI Conquered the US Economy: A Visual FAQ.”

Here’s a taste:

The American economy has split in two. There’s a rip-roaring AI economy. And there’s a lackluster consumer economy.

You see it in the economic statistics. Last quarter, spending on artificial intelligence outpaced the growth in consumer spending. Without AI, US economic growth would be meager.

You see it in stocks. In the last two years, about 60 percent of the stock market’s growth has come from AI-related companies, such as Microsoft, Nvidia, and Meta. Without the AI boom, stock market returns would be putrid.

You see it in the business data. According to Stripe, firms that self-describe as “AI companies” are dominating revenue growth on the platform, and they’re far surpassing the growth rate of any other group.

Nobody can say for sure whether the AI boom is evidence of the next Industrial Revolution or the next big bubble. All we know is that it’s happening. We can all stop talking about “what will happen if AI dominates the economy at such-and-such future date?” No, the AI economy is here and now. We’re living in it, for better or worse.

In a follow-up piece, Thompson warns of “The Looming Social Crisis of AI Friends and Chatbot Therapists.”

AI engineers set out to build god. But god is many things. Long before we build a deity of knowledge, an all-knowing entity that can solve every physical problem through its technical omnipotence, it seems we have built a different kind of god: a singular entity with the power to talk to the whole planet at once.

No matter what AI becomes, this is what AI already is: a globally scaled virtual interlocutor that can offer morsels of life advice wrapped in a mode of flattery that we have good reason to believe may increase narcissism and delusions among young and vulnerable users, respectively. I think this is something worth worrying about, whether you believe AI to be humankind’s greatest achievement or the mother of all pointless infrastructure bubbles. Long before artificial intelligence fulfills its purported promise to become our most important economic technology, we will have to reckon with it as a social technology.

Thompson’s “Abundance” co-author Ezra Klein struggles with similar questions for The New York Times but in a more “aw shucks” manner and throws in the obligatory pop culture reference to show he’s got that common touch:

I don’t know whether A.I. will look, in the economic statistics of the next 10 years, more like the invention of the internet, the invention of electricity or something else entirely. I hope to see A.I. systems driving forward drug discovery and scientific research, but I am not yet certain they will. But I’m taken aback at how quickly we have begun to treat its presence in our lives as normal. I would not have believed in 2020 what GPT-5 would be able to do in 2025. I would not have believed how many people would be using it, nor how attached millions of them would be to it.

But we’re already treating it as borderline banal — and so GPT-5 is just another update to a chatbot that has gone, in a few years, from barely speaking English to being able to intelligibly converse in virtually any imaginable voice about virtually anything a human being might want to talk about at a level that already exceeds that of most human beings.

…

I find myself thinking a lot about the end of the movie “Her,” in which the A.I.s decide they’re bored of talking to human beings and ascend into a purely digital realm, leaving their onetime masters bereft. It was a neat resolution to the plot, but it dodged the central questions raised by the film — and now in our lives. What if we come to love and depend on the A.I.s — if we prefer them, in many cases, to our fellow humans — and then they don’t leave?

Thompson and Klein, in their patented speak-slow-enough-for-the-midwits-to-keep-up method, might actually be circling around what I fear is the actual use case for Large Language Models: personalized persuasion at scale.

I have a feeling Peter Thiel and Alex Karp of Palantir, for example, have read this article in Nature:

The potential of generative AI for personalized persuasion at scale

Matching the language or content of a message to the psychological profile of its recipient (known as “personalized persuasion”) is widely considered to be one of the most effective messaging strategies. We demonstrate that the rapid advances in large language models (LLMs), like ChatGPT, could accelerate this influence by making personalized persuasion scalable. Across four studies (consisting of seven sub-studies; total N = 1788), we show that personalized messages crafted by ChatGPT exhibit significantly more influence than non-personalized messages. This was true across different domains of persuasion (e.g., marketing of consumer products, political appeals for climate action), psychological profiles (e.g., personality traits, political ideology, moral foundations), and when only providing the LLM with a single, short prompt naming or describing the targeted psychological dimension. Thus, our findings are among the first to demonstrate the potential for LLMs to automate, and thereby scale, the use of personalized persuasion in ways that enhance its effectiveness and efficiency. We discuss the implications for researchers, practitioners, and the general public.

As a plodding midwit myself, I am tempted to write off the LLM boom as a financial disaster in the making and a technological dead end that will never attain its stated goal of “inventing god”, but reading the study above and watching the video below from Benn Jordan make me fear there is a different game being played by Peter Thiel, Sam Altman, Elon Musk et al.

I’m sure many of you in the Naked Capitalism community are way ahead of me on understanding this (or are smart enough to poke holes in his argument), but for me, Jordan’s insights were revelatory.

Rather than trying to make money, the aspiring AI barons are hoarding power in the form of information.

Some key points from Jordan’s video:

What if capitalism’s death wasn’t a mistake or something that the ultra wealthy were trying to avoid?

What if the pesky burden of labor laws and taxation could be avoided by deprioritizing the goal of financial profit or maybe even money itself?

…

To help properly explain post capitalism I need to remind you of the utter absurdity of just having a billion dollars.

Not only is it impossible to spend on even the most lavish things that you would desire, but it’s also very much not like having a garage full of cash.

…

Cash is only powerful when you’re broke and it’s only valuable when you need or want something that you otherwise couldn’t get without cash.

What I instead want my viewers to be concerned with is your personal levels of power or control over the things that you earn, consume, and trade.

Jordan then explains the private equity takeover of the U.S. economy before explaining the implications of this regarding AI:

In the last 2 years AI was being shoved in my face virtually everywhere. As I’m sure you have experienced as well.

My Google searches now open a sidebar telling me about my previous search that I don’t have patience to sort out; or, if I want to make sure that a replacement vacuum hose works with my model of shop vac on Amazon, instead of searching through reviews and answers, I now have to talk to a bot named Rufus about it.

This is happening with emails, with messaging apps, image editors, everything.

I was initially really really confused by this because virtually nobody likes it. It changes familiar workflows of users which risk somebody leaving your ecosystem and it’s really expensive: just one round of training an LLM or large language model can cost over $200 million and that’s not to mention the hugely increased processing power that needs to be done every time that you query that model.

All of this is just happening automatically, just to beg us to use it. Why?

The reason that so many cloud-based companies are dumping so much money and resources into pushing this technology on everyone is to capture the part of our routine that comparison shops or researches or asks for advice or arrives at a logical conclusion about something.

If you become friends with ChatGPT or Gemini or Rufus or Siri and you consult with their vast informational resources on a more personal and casual level you’re not only supplying them with the purest form of sentiment analysis but you’re helping them build a system that will further control not only your decisions on what to buy or subscribe to but what hobby you’ll take up next summer.

Not only is it influencing your decision on where to buy an engagement ring or where and when to go on vacation to propose to your partner, there’s nothing stopping it from influencing your decision on whether you should get married at all.

It would be very naive to think of digital assistants as your assistants. They are very much not there to help you but to help their owners.

…

I am personally convinced that the market will crash again and more money will be borrowed and printed that will ultimately be invested into the further transformation into a rent-based economy.

…

Unfortunately for oligarchs money can’t buy everything. Money alone can’t change laws. It can’t force people to dress the way you want them to dress or to identify in a way that aligns with how you see them. It can’t make ideologies against the law. It can’t allow you to change the race, culture, or ethnicity of your neighbors. You can’t buy someone else’s choice to be or not be a parent.

To graduate, or transcend from those unfathomable limits of what money’s wealth can get you, you need to control the power of information so that you can manipulate the world that you live in.

I believe that that is exactly what’s happening and unfortunately, I think it’s going to get a lot worse before it gets better.

Jordan’s thesis has explanatory value beyond “whoa, these billionaires are a bunch of dumbasses driving their industry and our economy off a cliff.”

Maybe AI is more of a power grab than an economic play, and maybe it’s even a deliberate attempt to crash the economy à la 2008 and accelerate our transition further away from a capitalist economy as we understand it and toward the ultimate rentier’s paradise.

Or, for the rest of us, the perfect dystopia.